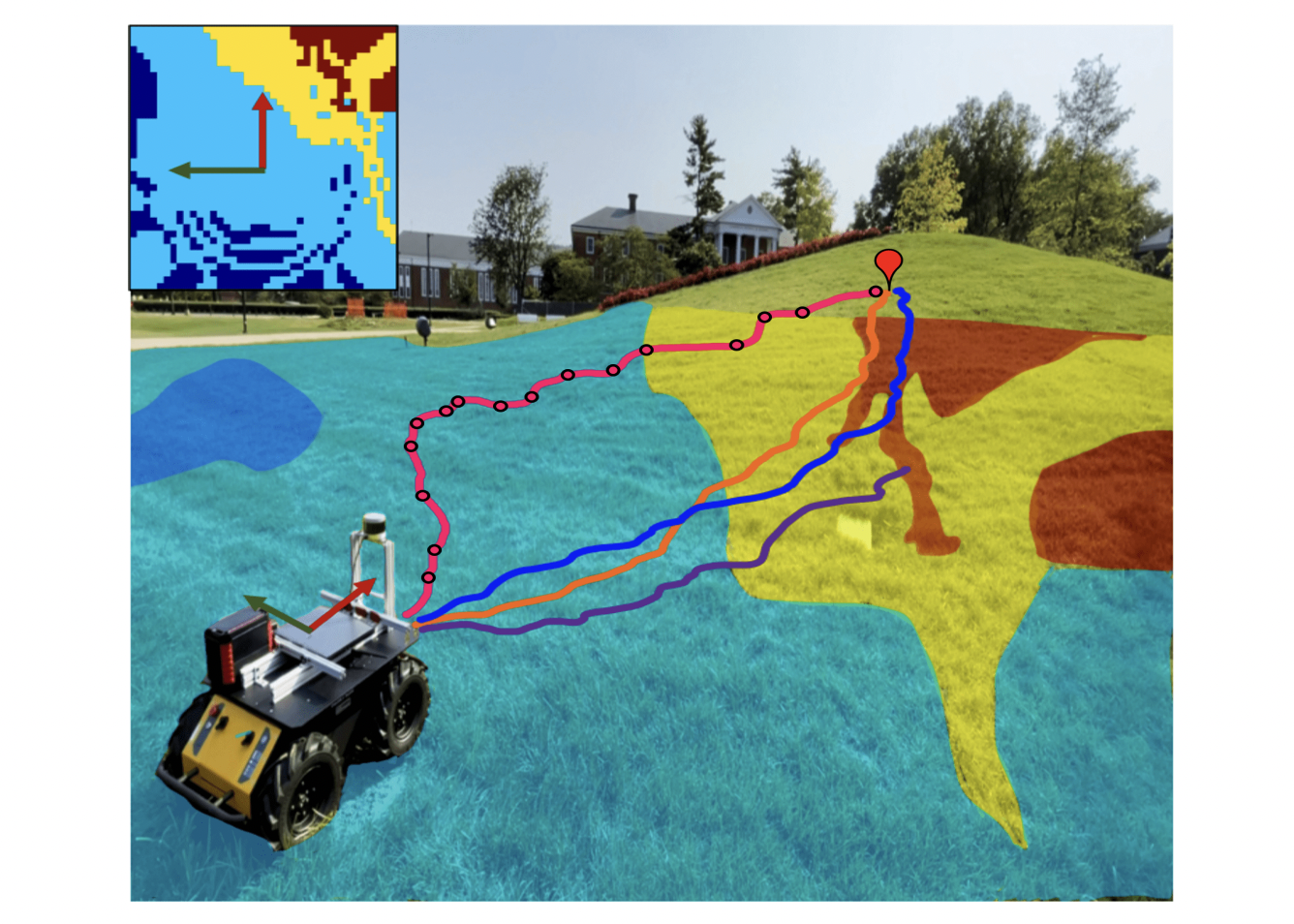

| Learning to navigate in an unknown environment is a crucial capability for a mobile robot. Conventional robot navigation consists of three steps: localization, map building, and path planning. However, most conventional navigation methods rely on an obstacle map and lack the ability to learn autonomously. In contrast to the traditional approach, this paper proposes an end-to-end approach that uses deep reinforcement learning for mobile robot navigation in an unknown environment. Building on dueling network architectures for deep reinforcement learning (Dueling DQN) and deep reinforcement learning with double Q-learning (Double DQN), a dueling-architecture-based double deep Q-network (D3QN) is adapted. With the D3QN algorithm, the mobile robot gradually learns about the environment as it wanders and learns to navigate to the target destination autonomously using only an RGB-D camera. The experimental results show that the mobile robot can reach the desired targets without colliding with any obstacles. |
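The two ideas that D3QN combines can be illustrated with a minimal sketch. It is not the project's implementation: the function names, the three-action example, and the numeric values are all assumptions made for illustration, and plain Python lists stand in for network outputs. The first function shows the mean-subtracted aggregation commonly used in dueling networks, and the second shows the Double DQN target, where the online network selects the next action and the target network evaluates it.

```python
from typing import List, Sequence


def dueling_q_values(state_value: float, advantages: Sequence[float]) -> List[float]:
    """Combine V(s) and A(s, a) into Q(s, a) using the mean-subtracted dueling rule."""
    mean_advantage = sum(advantages) / len(advantages)
    return [state_value + a - mean_advantage for a in advantages]


def double_dqn_target(
    reward: float,
    discount: float,
    done: bool,
    next_q_online: Sequence[float],
    next_q_target: Sequence[float],
) -> float:
    """Compute the Double DQN bootstrap target for a single transition."""
    if done:
        # Terminal transition (for example a collision or reaching the goal):
        # there is no future return to bootstrap from.
        return reward
    # The online network chooses the greedy next action ...
    best_action = max(range(len(next_q_online)), key=lambda a: next_q_online[a])
    # ... and the target network supplies the value of that action.
    return reward + discount * next_q_target[best_action]


if __name__ == "__main__":
    # Hypothetical action set: [forward, turn left, turn right].
    q_online_next = dueling_q_values(1.0, [0.5, -0.5, 0.0])
    q_target_next = [0.2, 2.0, 0.4]  # made-up target-network outputs
    target = double_dqn_target(1.0, 0.9, False, q_online_next, q_target_next)
    print(q_online_next, target)
```

In this example the target network rates the second action highest, but the target is built from the action the online network prefers. Decoupling selection from evaluation in this way is what Double DQN uses to reduce overestimated Q-values.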


SP110-Mobile Robot Navigation based on Deep Reinforcement Learning

₹13,000.00
